Introduction

A service mesh is an infrastructure layer built on top of a microservice architecture to provide observability, security, and reliability to applications. It ensures that communication between containers or pods is secure, fast, and encrypted. These communication problems are a by-product of moving away from a monolithic architecture, but the benefits of microservices outweigh the challenges to a great extent. A service mesh achieves this by working at the platform layer rather than the application layer.

Recap Kubernetes and its components.

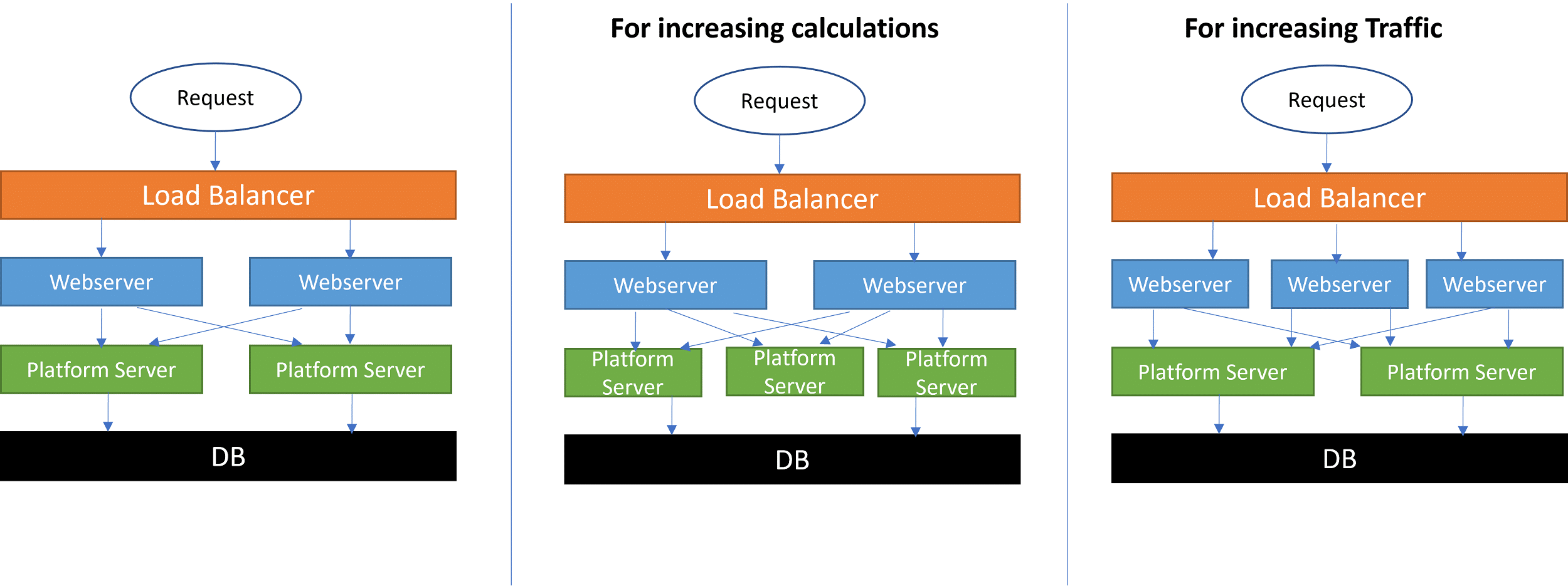

Because the service mesh runs at the platform layer, it does not take resources away from the application's core function.

To understand this, let us take an example where we are running a website that serves pages and also queries data for the user. If both of these functions ran on the web server, a heavy calculation could slow the response time of the web pages. Running the query work on a separate server avoids this problem.

Why do I need a service mesh?

A service mesh is an optional component, and integration is not mandatory. In practice, though, it is an essential management layer that helps all the different pods work in harmony. Here are several reasons why you will want to implement a service mesh in a Kubernetes environment.

One of the most widely used service mesh implementations is Istio. Read what Istio service mesh is.

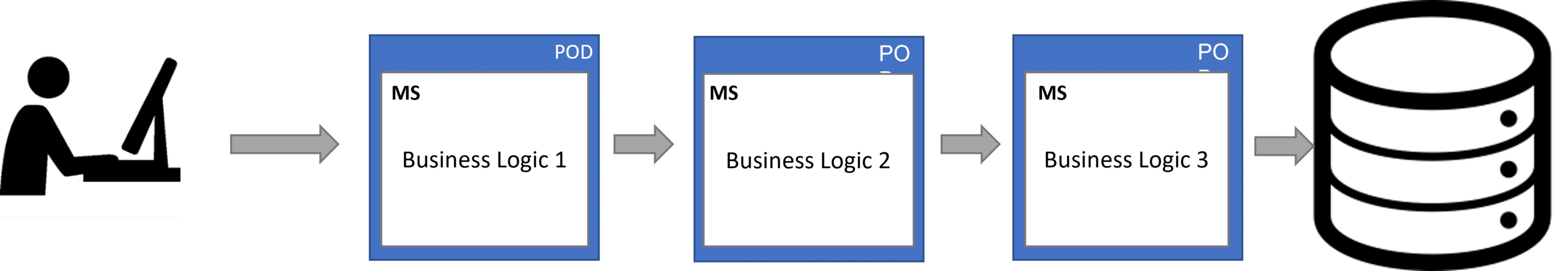

In a typical Kubernetes environment, user requests are fulfilled through a series of steps, where each of the steps is performed by a container or a pod.

Reason 1

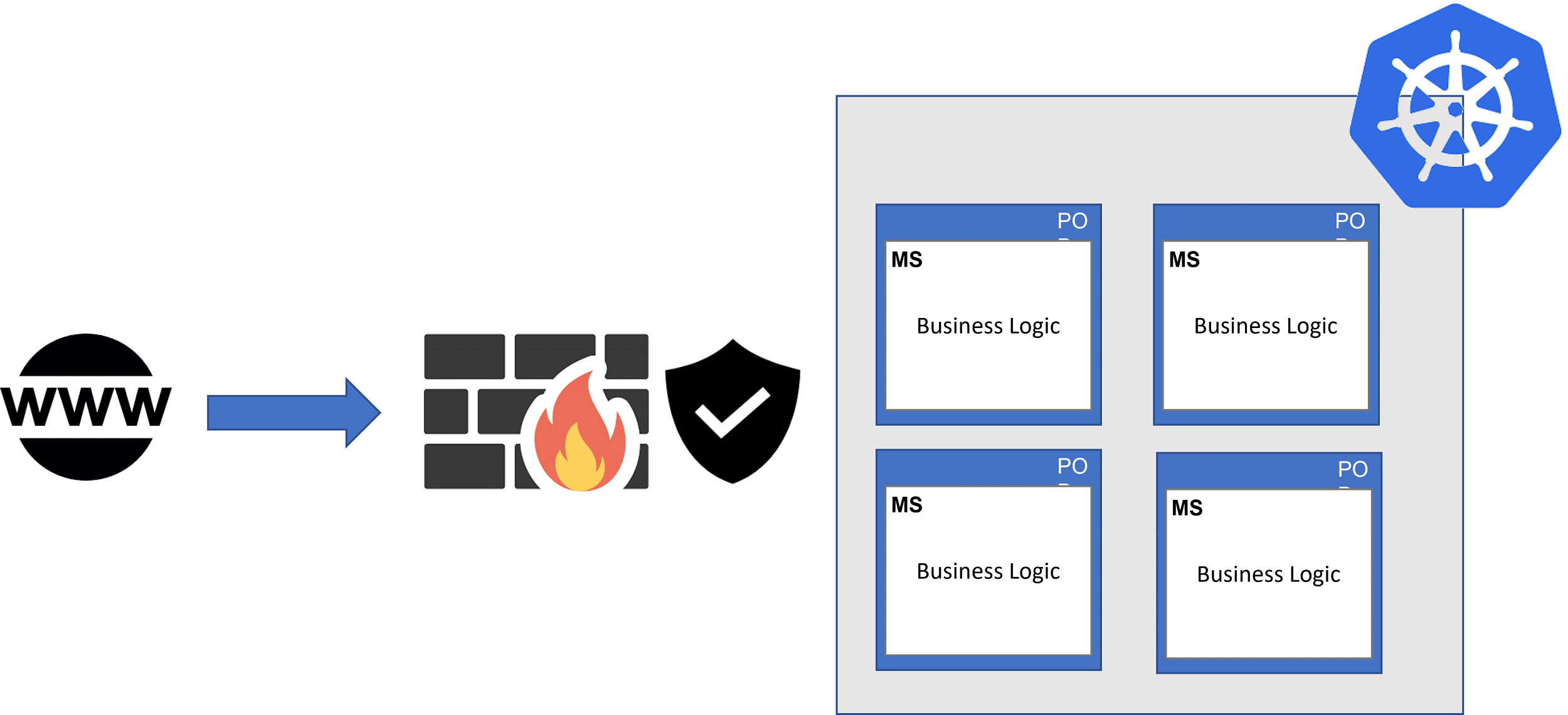

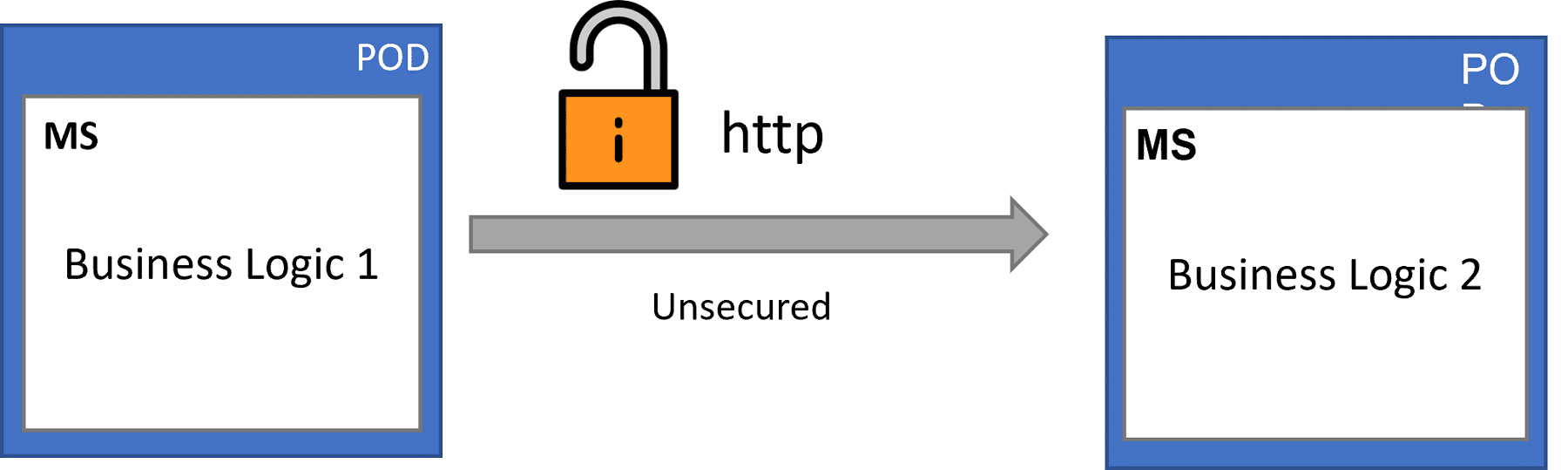

These clusters of pods are managed by Kubernetes and are protected by an external firewall, as shown in the picture above. All requests from the World Wide Web are encrypted. But inside the Kubernetes cluster, without a service mesh, the pods communicate with each other in plain text.

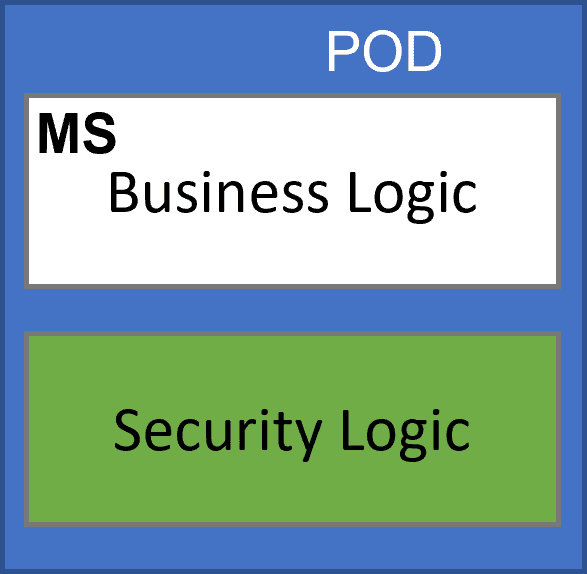

The communications inside the cluster are insecure. This poses a very serious threat to the overall security of the application. Once attackers are inside the cluster, they have complete freedom to do anything they want. Developers can solve this by integrating additional authentication protocols, known as security logic. But this added security layer has to be integrated with the business logic, which makes the overall code more complex.

Reason 2

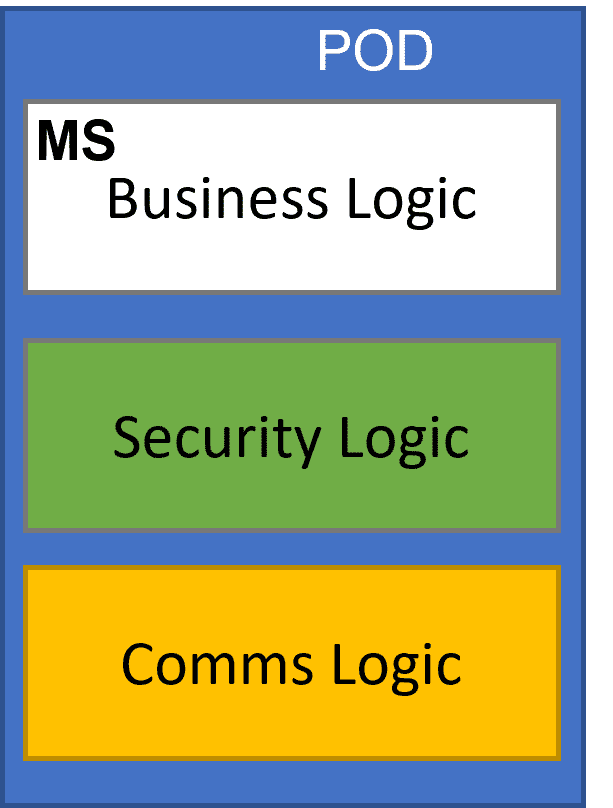

Inside a Kubernetes cluster, a pod can communicate with any other pod in the cluster. But to ensure fast communication, we need to define communication logic. Integrating this additional logic further complicates the code. Developers now need to manage security and communication logic on top of their core business logic.

Reason 3

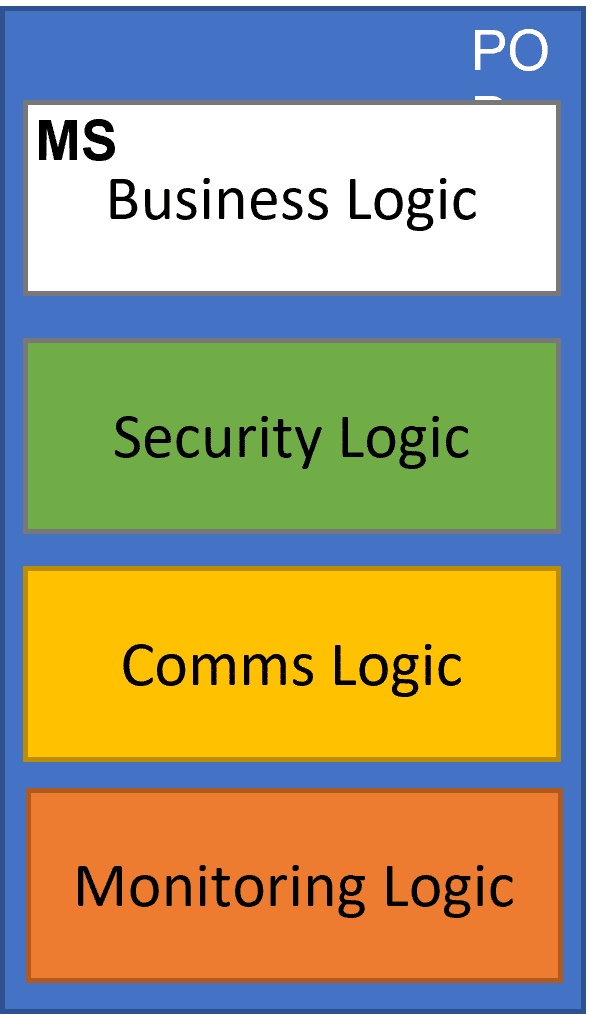

Running a monitoring service is essential, as we need to monitor logs and performance. This is done by implementing monitoring agents or monitoring logic.

Reason 4

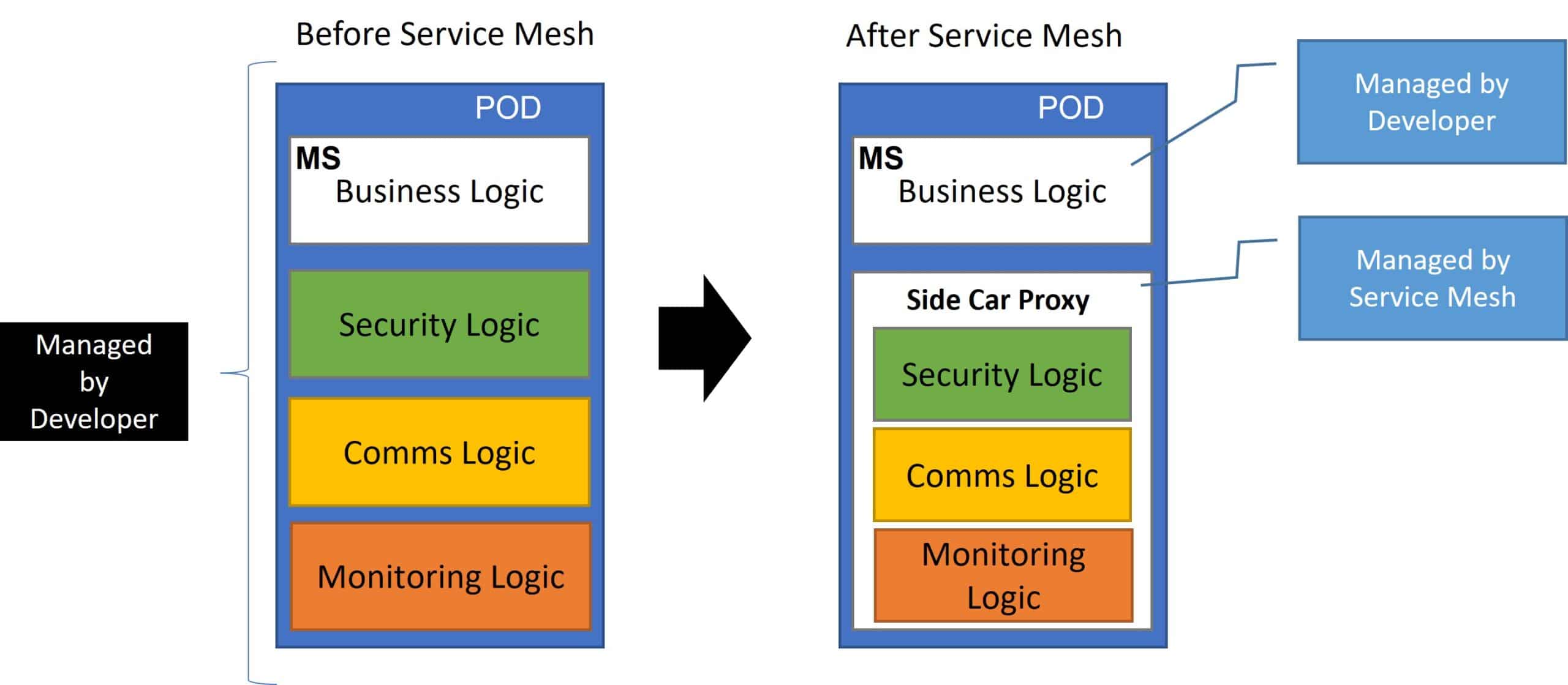

Think of the developers' plight when they must add each type of logic to each of these containers or pods. This is a very wasteful practice, and developers end up spending more time writing this supporting logic than writing the core business logic of the application. Besides taking time, it adds to the complexity of these microservices. This goes against the vision of a microservice as simple and lightweight.

How does a service mesh solve this problem?

The solution is pretty simple: automate all the repetitive and static logic tasks. A service mesh groups these tasks into a sidecar application called a sidecar proxy. With the help of a control plane, the service mesh automatically configures and injects these proxies into each pod so that developers can focus only on building business logic. The pods can now communicate with each other securely through the sidecar proxy injected into each pod.
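The injection step can be pictured with a small sketch. This is only an illustration, assuming a pod is described as a plain dictionary; the `inject_sidecar` function, the `mesh-proxy` container name, the image, and the port are all hypothetical and do not come from any real mesh or from the Kubernetes API.

```python
import copy

# Hypothetical proxy container definition. The name, image, and port are
# placeholders chosen for this sketch, not values used by a real service mesh.
SIDECAR = {"name": "mesh-proxy", "image": "example/mesh-proxy:latest", "ports": [15001]}


def inject_sidecar(pod_spec: dict) -> dict:
    """Return a copy of pod_spec with the proxy container added exactly once."""
    patched = copy.deepcopy(pod_spec)
    containers = patched.setdefault("containers", [])
    # Skip injection if the proxy is already present, so the step is repeatable.
    if not any(c["name"] == SIDECAR["name"] for c in containers):
        containers.append(copy.deepcopy(SIDECAR))
    return patched


# The application team only declares its own business container.
pod = {"containers": [{"name": "orders", "image": "example/orders:1.0"}]}
meshed = inject_sidecar(pod)
print([c["name"] for c in meshed["containers"]])  # ['orders', 'mesh-proxy']
```

The point of the sketch is that the application's own definition stays untouched: the proxy is added from outside, which is why developers do not have to write it into each service.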

What more can Service Mesh do for me?

A service mesh can act as a capable load balancer; load balancing is one of its core features. It can be easily configured to split traffic. Let us take an example to understand how. Suppose you are running an application on multiple clusters. One of your developers has added a new feature to the core application and wants to test it in production with live traffic. The easiest deployment strategy for testing the new feature is to push it into a container or pod inside the cluster and then divert a portion of the overall traffic to it to collect essential logs and metrics.
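Diverting a portion of traffic amounts to weighted routing. The sketch below is a simplified illustration of that idea, not how any particular mesh implements it; the `pick_backend` helper and the 90/10 split between `stable` and `canary` are assumptions made for the example.

```python
import random
from collections import Counter


def pick_backend(weights: dict, rng: random.Random) -> str:
    """Choose a backend name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


# Hypothetical split: about 90% of requests go to the stable version
# and about 10% go to the canary carrying the new feature.
split = {"stable": 90, "canary": 10}
rng = random.Random(42)  # seeded so repeated runs give the same counts

# Route 10,000 simulated requests and count where each one landed.
counts = Counter(pick_backend(split, rng) for _ in range(10_000))
print(counts)
```

In a real mesh this decision is made by the sidecar proxies from configuration pushed by the control plane, so changing the split requires no change to application code.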

The service mesh sidecar is also designed to collect APM data and logs that the developer can use to analyze the canary instance. These logs and metrics are collected using a tool like Splunk or Datadog. With the help of OpsMx Autopilot, one can automate the whole process of testing a deployment using canary analysis and perform automatic rollbacks whenever necessary.
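To make the idea of canary analysis concrete, here is a deliberately minimal sketch of a rollback decision based on error rates alone. It is not how OpsMx Autopilot or any other product works; the `should_rollback` function, its parameters, and the one-percentage-point tolerance are hypothetical choices for illustration.

```python
def should_rollback(baseline_errors: int, baseline_total: int,
                    canary_errors: int, canary_total: int,
                    tolerance: float = 0.01) -> bool:
    """Roll back when the canary error rate exceeds the baseline's by more than tolerance."""
    if canary_total == 0:
        return False  # no canary traffic yet, so there is nothing to judge
    baseline_rate = baseline_errors / baseline_total if baseline_total else 0.0
    canary_rate = canary_errors / canary_total
    return canary_rate - baseline_rate > tolerance


# Canary at 3.0% errors against a 0.5% baseline: the gap exceeds the tolerance.
print(should_rollback(50, 10_000, 30, 1_000))  # True
# Canary at 0.6% errors against a 0.5% baseline: within the tolerance.
print(should_rollback(50, 10_000, 6, 1_000))   # False
```

A production analysis would usually weigh many metrics and account for statistical noise, which is the part that dedicated tooling automates.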

Conclusion

For organizations using a microservice architecture, a service mesh should not be treated as an optional extra. It provides critical observability, reliability, and security features. Because it runs at the platform level, it is not a burden on the core business application. It frees up developers' valuable time so that they can focus on value-added activities.

If you are using Spinnaker or Argo to deploy your microservices, you can leverage those CD tools with Istio for canary deployments. Reach out to the Argo Center of Excellence or the Spinnaker Center of Excellence.

But if you are not using a microservice architecture for your infrastructure, we recommend switching to one. The benefits of using a microservice model will far outweigh the challenges.

About OpsMx

Founded with the vision of “delivering software without human intervention,” OpsMx enables customers to transform and automate their software delivery processes. OpsMx builds on open-source Spinnaker and Argo with services and software that help DevOps teams SHIP BETTER SOFTWARE FASTER.

A service mesh can be defined as a dedicated infrastructure layer that facilitates service-to-service communication using proxies. Most managed security service providers use one.