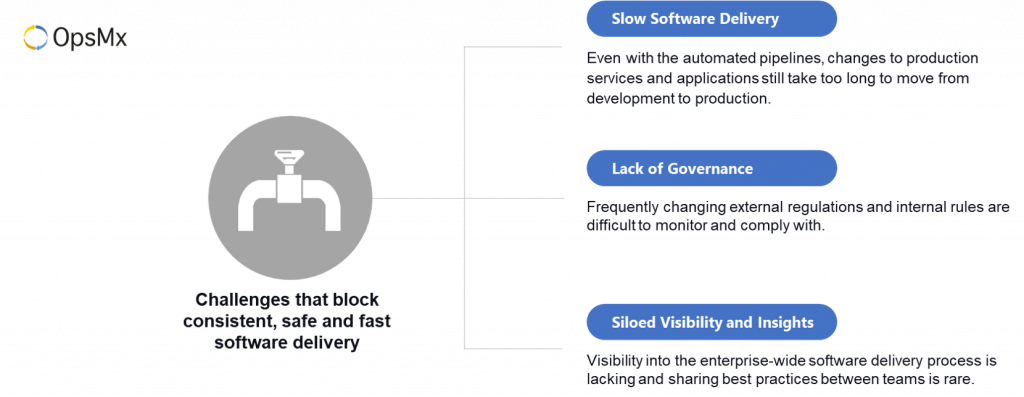

After implementing a CI/CD process, many organizations still face three significant challenges that keep them from delivering software consistently, safely, and quickly. The three main factors that slow down a CI/CD pipeline are as follows:

- Slow software delivery: Even with automated pipelines, changes to production services and applications still take too long to move from development to production.

- Lack of governance: Frequently changing external regulations and internal rules are difficult to monitor and comply with.

- Siloed visibility and insights: Visibility into the enterprise-wide software delivery process is lacking, and sharing best practices between teams is rare.

Challenges to Fast Software Delivery

To address these problems, you need to add a layer of intelligence that assists throughout your entire CI/CD process across your teams and tools.

This blog, the first of three parts, describes the three main problems and their causes. The second post discusses OpsMx Autopilot, an ML-based solution to these problems. The third post discusses specific customer examples of Autopilot in action.

Challenge 1: Slow software delivery (manual reviews slow the CI/CD process)

“We implemented a modern CI & CD tool, but our process is still slow and costly.”

The head of DevOps at a large (Fortune 100) enterprise leads a team of engineers responsible for delivering one of the most popular websites in the world, with more than 200M unique monthly viewers and 300K positive reviews. Even so, the team still struggles to deliver enhancements to customers quickly.

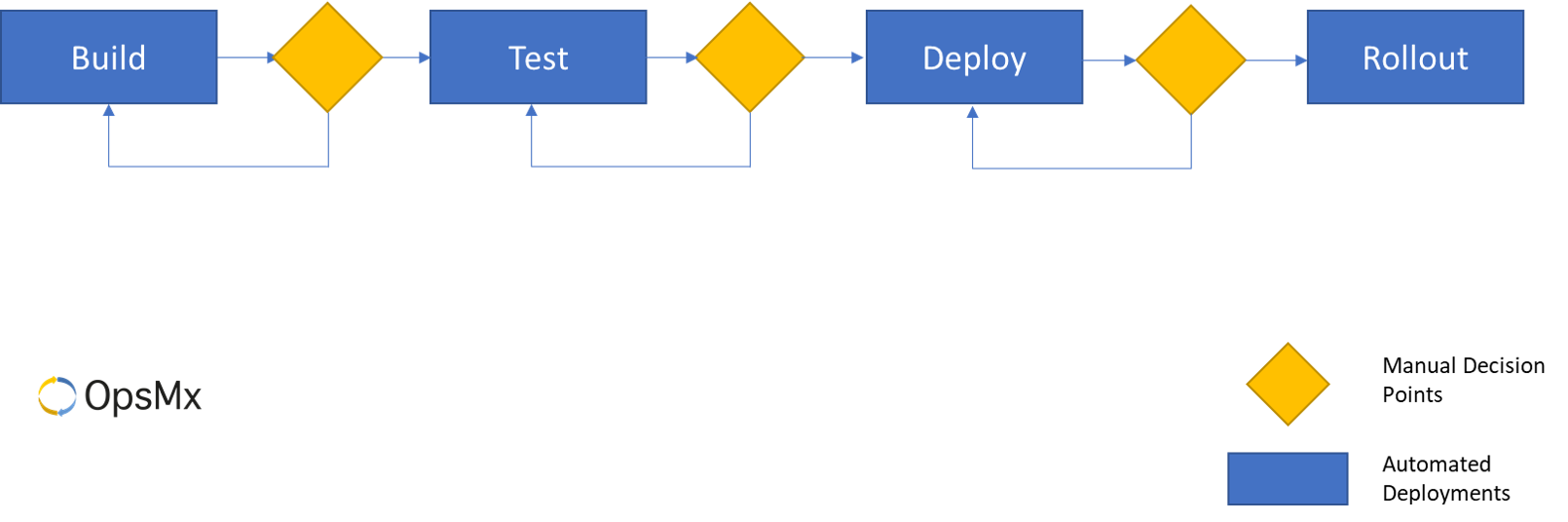

Figure 1: A Simple Software Delivery Pipeline

Consider a simple software delivery pipeline, as shown in Figure 1. Even with automated deployments in place, the process stops at specific decision points. These create bottlenecks where the pipeline waits for a decision: whether to proceed or to start over on that deployment.

Manual Reviews

At each decision point, a person, or more likely a team of senior engineers, analyzes the gathered data to evaluate the risk and compares it to previous similar changes. In addition, they validate that all policies have been followed and recommend whether to promote the change to the next stage in the pipeline. Finally, an authorized decision maker concludes the process, and the deployment is promoted or rejected.

More Manual Reviews (sigh!)

The decision process at every stage is manual and similar in concept, but each stage serves a different function, with different experts analyzing the data and deciding whether the updates qualify to progress to the next stage in the pipeline.

Understanding the Pain Points of Manual Reviews

For example, after the “test stage,” the data comes from automated and manual tests and may include basic system metrics; the decision maker is probably a QA leader. After the “deploy stage,” data comes from Jira to describe the change, from a performance testing tool, from APM tools, and perhaps from other tools. Typically, the DevOps or Operations leader evaluates the data, supported by senior engineers and operations experts.

Within an organization, the process can vary based on the application, the team, the time of year, the importance and scope of the change, and many other factors. However, at each decision point, the risk of a change moving forward is weighed against the benefit of the change. When deploying a small number of changes, this delay is minor, and the process works fine. When you have many changes, the wait is fatal to the release process.

Pain Points of Manual Reviews in Enterprises and Large Organizations

Even in the best of organizations, the analysis and approval of a release from development to production can easily consume 3 hours; some approvals can take 12 hours or more. When you have thousands of deployments per month, it is easy to see how the human, manual (sigh!) decision process prevents rapid release to customers.
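To make the scale concrete, here is a minimal back-of-the-envelope sketch. The 3-hour and 12-hour figures come from the text above; the count of 1,000 deployments per month is an assumption chosen as the low end of "thousands", and the function name is hypothetical.

```python
def monthly_approval_wait_hours(deployments_per_month: int,
                                hours_per_approval: float) -> float:
    """Cumulative hours that releases spend waiting on manual approval."""
    return deployments_per_month * hours_per_approval


# Assumption: 1,000 deployments per month (the article only says "thousands").
DEPLOYMENTS_PER_MONTH = 1000

typical = monthly_approval_wait_hours(DEPLOYMENTS_PER_MONTH, 3)   # "easily 3 hours"
slow = monthly_approval_wait_hours(DEPLOYMENTS_PER_MONTH, 12)     # "12 hours or more"

print(f"Typical approvals: {typical:,.0f} waiting hours per month")
print(f"Slow approvals:    {slow:,.0f} waiting hours per month")
```

Under these assumptions, releases accumulate 3,000 hours of waiting per month at the typical rate and 12,000 hours at the slow rate. These are hours of delay summed across deployments, not necessarily hours of reviewer effort.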

Takeaway: The Need for Automated Reviews

Careful and well-informed decisions are critical because errors in a production system harm the company brand. Of course, the cost of production errors varies widely, but outages of critical, customer-facing applications can cost an organization $30,000 per minute or more. The cost of a compliance violation can be even higher.

Challenge 2: Lack of Compliance

Adhering to governance rules is mandatory. Without an intelligent system to automate the process of rule management and enforcement, compliance with current software delivery policies is nearly impossible.

Large organizations can have thousands of policies in place. Some compliance rules vary by geography, by application, or by team. Some guidelines and regulations change frequently, making compliance difficult.

Types of compliance rules include:

Compliance Rules

- Best practices that are recommended in order to reduce errors or increase cost-efficiency. Examples include guidelines for the correct infrastructure template for a specific application in a specific cloud, or configuration specifications for the versions of system software to deploy.

- Operational constraints such as not being able to deploy during a blackout period.

- Security constraints such as requiring a security scan before deployment to a canary environment or to production, or a limitation on which open source libraries may be used, or policies on who can approve the deployment of builds to production.

- External regulations, such as the separation of duties required by SOX, where a change must be approved by someone other than the person who implemented it. Many other external regulations apply as well.

In addition to following the required policies, organizations must prove that the policies are followed. This adds an audit requirement on top of automating the governance rules themselves.
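As an illustration of how rules like these might be automated, here is a minimal sketch that checks a blackout period, a required security scan, and separation of duties, and returns a record that could be kept for audit. Everything in it is hypothetical: the `Deployment` fields, the blackout dates, and the policy names are assumptions for the example, not the API of any OpsMx product.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Deployment:
    # Hypothetical metadata; a real pipeline would supply its own fields.
    author: str
    approver: str
    security_scan_passed: bool
    requested_at: datetime


# Hypothetical blackout window, for example a holiday sales freeze.
BLACKOUT_START = datetime(2024, 11, 25)
BLACKOUT_END = datetime(2024, 12, 2)


def outside_blackout(d: Deployment) -> bool:
    """Operational constraint: no deployments during the blackout period."""
    return not (BLACKOUT_START <= d.requested_at < BLACKOUT_END)


def security_scan_ok(d: Deployment) -> bool:
    """Security constraint: a scan must pass before deployment."""
    return d.security_scan_passed


def separation_of_duties(d: Deployment) -> bool:
    """External regulation: the approver must not be the implementer."""
    return d.approver != d.author


POLICIES = {
    "outside_blackout": outside_blackout,
    "security_scan_passed": security_scan_ok,
    "separation_of_duties": separation_of_duties,
}


def evaluate(d: Deployment) -> dict:
    """Run every policy and return a record suitable for an audit trail."""
    results = {name: check(d) for name, check in POLICIES.items()}
    return {
        "author": d.author,
        "approver": d.approver,
        "requested_at": d.requested_at.isoformat(),
        "results": results,
        "allowed": all(results.values()),
    }
```

Calling `evaluate(Deployment("alice", "alice", True, datetime(2024, 11, 26)))` would return a record with `allowed` set to `False`, because both the blackout check and the separation-of-duties check fail. Keeping the per-policy results in the record, rather than only the final verdict, is what lets the same data serve as evidence in an audit.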

Challenge 3: Siloed Visibility and Insights

In addition to speeding the delivery of each change to each application, a well-run, intelligent continuous delivery solution takes a holistic view. This view examines all teams and all CD tools in use. The solution finds trends in the CD process – both good and bad – and shares best practices across the organization.

This can be done only by looking at the performance of all pipelines and all systems, over time, and across all your applications. Without a system built to provide visibility at the highest level, the organization will optimize at an individual level and will miss opportunities to dramatically improve. The challenge is compounded because most organizations have multiple CD systems in use, which makes collecting data across all tools harder.

Any solution should provide a high-level view, typically in a dashboard format, that enables teams to identify specific problems in a given pipeline over time, spot trends across teams and tools, and find best practices to share between teams.
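The kind of roll-up that such a dashboard relies on can be sketched in a few lines. This is a minimal example under stated assumptions: the `PipelineRun` record, its fields, and the team and tool names are all hypothetical, and a real system would first have to collect and normalize this data from each CD tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class PipelineRun:
    # Hypothetical, already-normalized record of one pipeline execution.
    team: str
    tool: str                   # which CD system produced the run
    succeeded: bool
    approval_wait_hours: float


def summarize_by_team(runs):
    """Aggregate runs from all tools into one per-team summary."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run.team].append(run)

    summary = {}
    for team, team_runs in grouped.items():
        summary[team] = {
            "runs": len(team_runs),
            "success_rate": sum(r.succeeded for r in team_runs) / len(team_runs),
            "mean_approval_wait_hours": mean(
                r.approval_wait_hours for r in team_runs
            ),
            "tools": sorted({r.tool for r in team_runs}),
        }
    return summary


runs = [
    PipelineRun("payments", "cd-tool-a", True, 2.0),
    PipelineRun("payments", "cd-tool-b", False, 4.0),
    PipelineRun("search", "cd-tool-a", True, 1.0),
]
for team, stats in summarize_by_team(runs).items():
    print(team, stats)
```

Comparing the per-team numbers side by side is what surfaces the outliers: a team with a short approval wait and a high success rate is a candidate source of best practices for the others.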

In the past, the scale and speed required for software delivery did not require automation. In today’s constant drive for faster response, a new approach is required – human decision making simply isn’t fast enough.

How to tackle the challenges of manual reviews?

You can solve these three issues – slow delivery, inadequate governance, and poor visibility – only by adding a layer of intelligence to your CD system. OpsMx Autopilot is a machine-learning-based system that supports any CI/CD platform and provides automated release verification, real-time decisions, governance, compliance, and intelligent visibility.

Benefits of Deployment Firewall for CI/CD

OpsMx’s Deployment Firewall (formerly called Autopilot) adds a layer of security automation to your CI/CD process for the following three benefits:

- Reduce risk and shorten approvals – reduce the data-gathering drudgery of the verification process and automate the approval decision when possible.

- Improve governance and compliance – ensure compliance with your corporate and regulatory policies and enable you to audit all the steps in your CI/CD process.

- Generate insights and visibility – enable high-level management of the enterprise-wide software delivery process by sharing CI/CD metrics and DevOps best practices among teams.

Please read the blog for a detailed overview of OpsMx Autopilot, a layer of intelligence for software delivery.

(Note: The former module called Autopilot is now deprecated and has been replaced by the Deployment Firewall, a better way to automate verification, approvals, and security controls.)

About OpsMx

OpsMx is a leading innovator and thought leader in the Secure Continuous Delivery space. Leading technology companies such as Google, Cisco, and Western Union, among others, rely on OpsMx to ship better software faster.

OpsMx Secure CD is the industry’s first CI/CD solution designed for software supply chain security. With built-in compliance controls, automated security assessment, and policy enforcement, OpsMx Secure CD can help you deliver software quickly without sacrificing security.

OpsMx Deploy Shield adds DevSecOps to your existing CI/CD tools with application security orchestration, correlation, and posture management.
