MySQL for Orca and Clouddriver in an OpenShift environment

In this blog, we demonstrate how to configure MySQL as the datastore for the Orca and Clouddriver microservices running as pods in an OpenShift environment, without any downtime. These guidelines also apply to any Kubernetes environment.

Spinnaker has been using Redis as the data store for all its microservices.

We maintain several enterprise Spinnaker installations and, over time, have run into many scaling challenges with Clouddriver and Orca’s original Redis-backed implementation. With a large number of applications and pipelines deployed, we realized that we needed a more reliable database. MySQL knowledge is also relatively ubiquitous, it meets our core requirements, and it has the added benefit of query flexibility.

Goals:

Running a MySQL pod on OpenShift has its challenges, as the container must run as a non-root user. We are going to configure two MySQL deployments, one for Clouddriver and one for Orca, each with its own Service and ConfigMap.

The Clouddriver deployment won’t have any persistent storage, but Orca, which maintains the activity and history of pipeline executions, will be assigned a PV.

Clouddriver will be switched over to MySQL directly, since we have no dependency on its existing data in Redis.

For Orca we will use the Dual Execution Repository, i.e. all new executions go to MySQL and all historical executions are fetched from Redis. MySQL 5.7 has been tested with Orca and Clouddriver.

Openshift Image: “docker pull registry.access.redhat.com/rhscl/mysql-57-rhel7”

Kubernetes Image: “docker pull mysql:5.7”

These are the steps we will follow in this blog:

Step 1: Configure a MySQL deployment with a Service, ConfigMap and PVC for Clouddriver and Orca (the PVC is configured only for Orca, for data persistence).

Step 2: Configure the database and users for Clouddriver and Orca respectively.

Step 3: Configure clouddriver-local.yml and orca-local.yml with the updated MySQL settings and do a “hal deploy apply” with the service names.

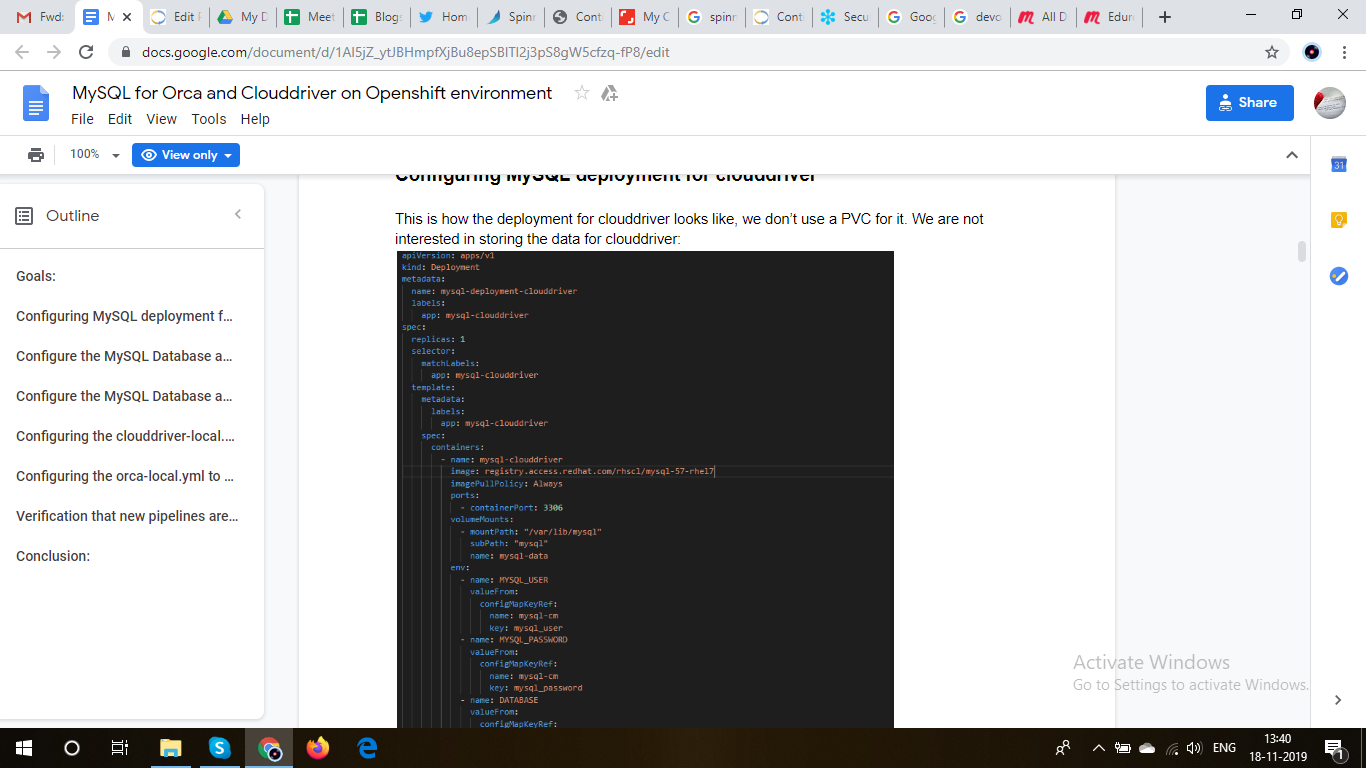

Configuring MySQL deployment for clouddriver

This is what the deployment for Clouddriver looks like. We don’t use a PVC for it, because we are not interested in persisting Clouddriver’s data:

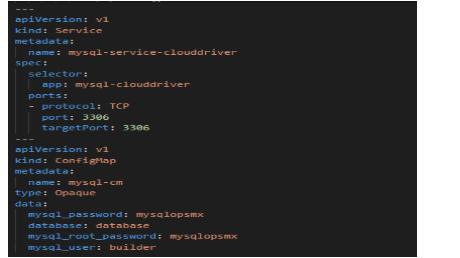

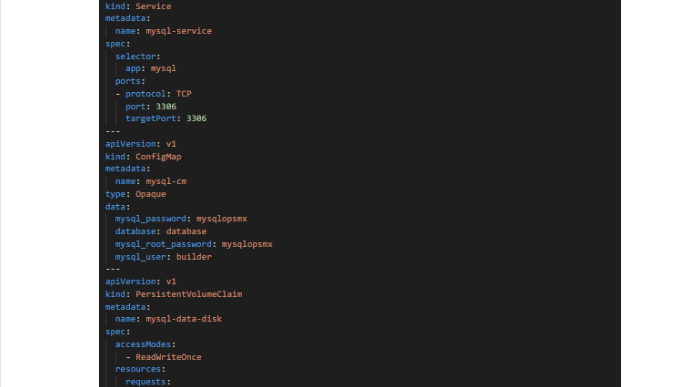
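For readers who cannot see the screenshots, here is a minimal sketch of what such a Deployment could look like. It is not the exact manifest from our environment: the resource name mysql-clouddriver, the Secret mysql-clouddriver-secret and its key are hypothetical, and the environment variable and data directory are the ones we understand the Red Hat image to use. An emptyDir volume is used because no data needs to survive a pod restart.

```yaml
# Sketch only: names and the Secret are illustrative assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mysql-clouddriver
  labels:
    app: mysql-clouddriver
spec:
  replicas: 1
  selector:
    matchLabels:
      app: mysql-clouddriver
  template:
    metadata:
      labels:
        app: mysql-clouddriver
    spec:
      containers:
        - name: mysql
          image: registry.access.redhat.com/rhscl/mysql-57-rhel7
          ports:
            - containerPort: 3306
          env:
            # Root password is read from a (hypothetical) Secret
            - name: MYSQL_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mysql-clouddriver-secret
                  key: root-password
          volumeMounts:
            # Data directory assumed for the Red Hat image
            - name: data
              mountPath: /var/lib/mysql/data
      volumes:
        # No PVC: the data is discarded when the pod goes away
        - name: data
          emptyDir: {}
```

With the upstream mysql:5.7 image, the data directory would be /var/lib/mysql instead.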

The service and Config Map would look like this:

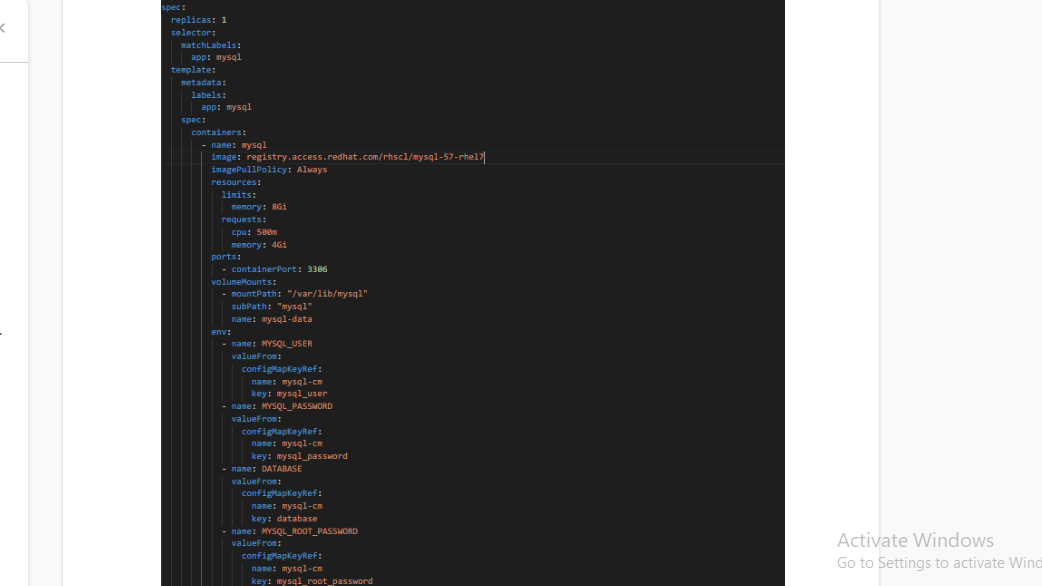
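As a rough illustration of the same idea in text form, a Service and ConfigMap for the Clouddriver MySQL pod might look like the sketch below. The names are hypothetical and must match the labels used in your own Deployment. The READ-COMMITTED isolation setting is an assumption based on what the Spinnaker documentation recommends for MySQL, and where the ConfigMap has to be mounted depends on the image you use.

```yaml
# Sketch only: resource names and the config file name are assumptions.
apiVersion: v1
kind: Service
metadata:
  name: mysql-clouddriver
spec:
  selector:
    app: mysql-clouddriver   # must match the Deployment's pod labels
  ports:
    - name: mysql
      port: 3306
      targetPort: 3306
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql-clouddriver-config
data:
  # Mounted into the image's MySQL config include directory
  spinnaker.cnf: |
    [mysqld]
    transaction-isolation = READ-COMMITTED
```

The Service name is what Clouddriver later uses as the host name in its JDBC URL.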

Configuring MySQL deployment for Orca:

We configure the MySQL deployment for Orca in the same way as for Clouddriver, but we use a PVC to store the database and avoid data loss. We also back up the MySQL DB to make sure we don’t lose any data.

The service, PVC and the config Map:
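The part that differs from the Clouddriver setup is the PVC. A minimal sketch, assuming a hypothetical claim name mysql-orca-data and an arbitrary 10Gi size (pick a size and storage class that suit your cluster):

```yaml
# Sketch only: claim name and size are illustrative assumptions.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mysql-orca-data
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
# In the Orca MySQL Deployment, the pod spec would then reference the
# claim instead of an emptyDir volume:
#   volumes:
#     - name: data
#       persistentVolumeClaim:
#         claimName: mysql-orca-data
```

The Service and ConfigMap for Orca would follow the same pattern as the Clouddriver ones, with the names changed.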

Configure the MySQL Database and Users for clouddriver

Once the pod is up and running, execute the following commands.

Log in as root, since the users must be created by root:

# mysql -u root -p$MYSQL_PASSWORD -h $HOSTNAME $MYSQL_DATABASE

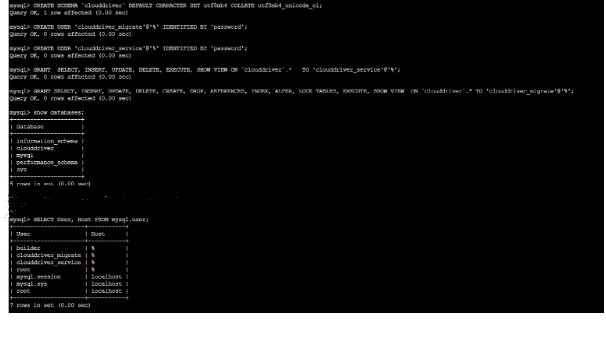

Once you are at the prompt, the first step is to create a database called “clouddriver” and then create two users, clouddriver_service and clouddriver_migrate, which are granted permissions to access it.

# mysql> CREATE SCHEMA `clouddriver` DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;

# mysql> CREATE USER 'clouddriver_service'@'%' IDENTIFIED BY 'password';

# mysql> CREATE USER 'clouddriver_migrate'@'%' IDENTIFIED BY 'password';

# mysql> GRANT SELECT, INSERT, UPDATE, DELETE, EXECUTE, SHOW VIEW ON `clouddriver`.* TO 'clouddriver_service'@'%';

# mysql> GRANT SELECT, INSERT, UPDATE, DELETE, CREATE, DROP, REFERENCES, INDEX, ALTER, LOCK TABLES, EXECUTE, SHOW VIEW ON `clouddriver`.* TO 'clouddriver_migrate'@'%';

You can see that the users clouddriver_service and clouddriver_migrate have been created with their passwords, and that the database “clouddriver” has been configured.

Configure the MySQL Database and Users for Orca:

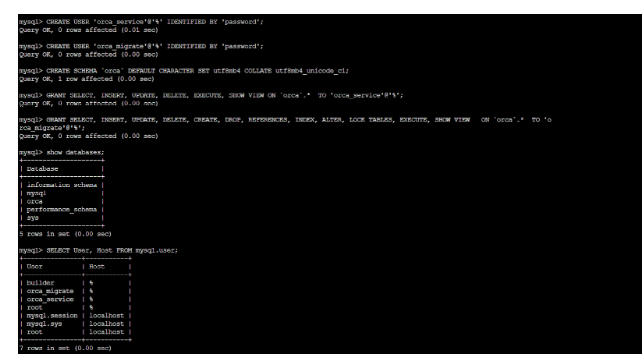

# mysql> CREATE SCHEMA `orca` DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;

# mysql> CREATE USER 'orca_service'@'%' IDENTIFIED BY 'password';

# mysql> CREATE USER 'orca_migrate'@'%' IDENTIFIED BY 'password';

# mysql> GRANT SELECT, INSERT, UPDATE, DELETE, EXECUTE, SHOW VIEW ON `orca`.* TO 'orca_service'@'%';

# mysql> GRANT SELECT, INSERT, UPDATE, DELETE, CREATE, DROP, REFERENCES, INDEX, ALTER, LOCK TABLES, EXECUTE, SHOW VIEW ON `orca`.* TO 'orca_migrate'@'%';

You can see that the users orca_service and orca_migrate have been created with their passwords, and that the database “orca” has been configured.

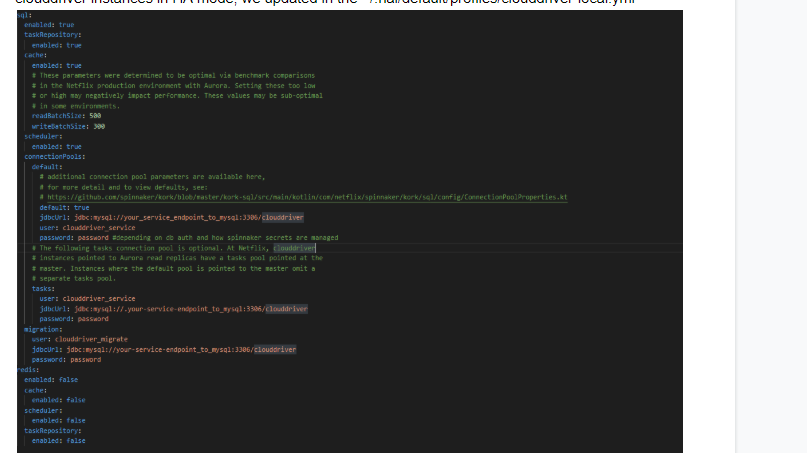

Configuring the clouddriver-local.yml to point to MySQL from Redis:

Once the MySQL database and users are configured, we need to edit clouddriver-local.yml so that Clouddriver points to MySQL instead of Redis. Since our Clouddriver instances were running in HA mode, we made the change in the clouddriver-local.yml profile under ~/.hal/default/profiles/.

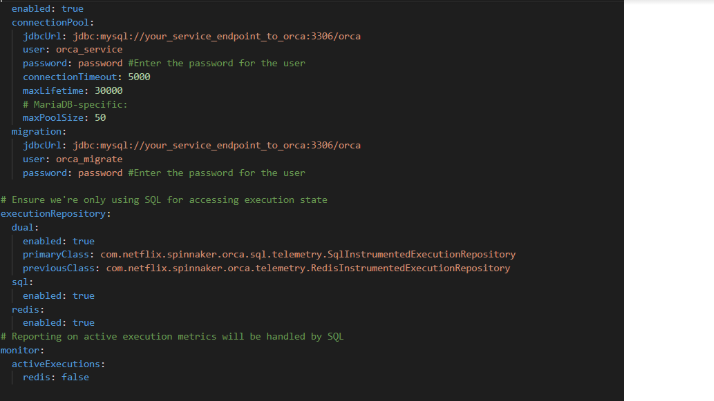
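In text form, the profile could look roughly like the sketch below. It follows the layout described in the Spinnaker SQL documentation for Clouddriver, but the host name mysql-clouddriver (the Service name) is a hypothetical value, and the passwords are the placeholders used in the CREATE USER statements above. Adjust all of them to your environment.

```yaml
# clouddriver-local.yml (sketch; host name and passwords are placeholders)
sql:
  enabled: true
  taskRepository:
    enabled: true
  cache:
    enabled: true
  scheduler:
    enabled: true
  connectionPools:
    default:
      default: true
      jdbcUrl: jdbc:mysql://mysql-clouddriver:3306/clouddriver
      user: clouddriver_service
      password: password
    tasks:
      jdbcUrl: jdbc:mysql://mysql-clouddriver:3306/clouddriver
      user: clouddriver_service
      password: password
  migration:
    # The migrate user has the DDL privileges needed to create the schema
    jdbcUrl: jdbc:mysql://mysql-clouddriver:3306/clouddriver
    user: clouddriver_migrate
    password: password

# Turn off the Redis-backed implementations that SQL replaces
redis:
  enabled: false
  cache:
    enabled: false
  scheduler:
    enabled: false
  taskRepository:
    enabled: false
```

The service user is used for normal operation, while the migrate user is only used to apply schema changes at startup.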

Configuring the orca-local.yml to apply Dual Execution Repository:

Once the MySQL database and users are configured, we need to edit orca-local.yml to point to both MySQL and Redis, as we are using the Dual Execution Repository. All newer executions go to MySQL and all historical executions can still be retrieved from Redis.

As with Clouddriver, we made the change in ~/.hal/default/profiles/orca-local.yml.

Once you have configured clouddriver-local.yml and orca-local.yml, you need to do a “hal deploy apply” with the service names of clouddriver and orca.
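A sketch of what the Orca profile could contain is shown below. The host name mysql-orca is a hypothetical Service name and the passwords are placeholders. The keys under executionRepository.dual are an assumption based on recent Orca releases; to our knowledge older releases used primaryClass and previousClass with fully qualified class names instead, so check the documentation for your Spinnaker version.

```yaml
# orca-local.yml (sketch; host name, passwords and dual keys are assumptions)
sql:
  enabled: true
  connectionPool:
    jdbcUrl: jdbc:mysql://mysql-orca:3306/orca
    user: orca_service
    password: password
  migration:
    jdbcUrl: jdbc:mysql://mysql-orca:3306/orca
    user: orca_migrate
    password: password

executionRepository:
  dual:
    enabled: true
    # New executions are written to the primary (MySQL)
    primaryName: sqlExecutionRepository
    # Historical executions are still read from the previous store (Redis)
    previousName: redisExecutionRepository
  sql:
    enabled: true
  redis:
    enabled: true
```

Keeping both repositories enabled is what lets Orca continue to serve old executions from Redis while writing new ones to MySQL.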

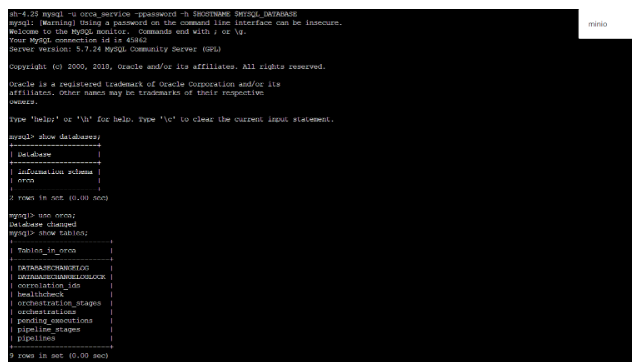

Verifying that new pipelines are going to MySQL:

Log in to the Orca MySQL pod and, in the terminal, verify that the pipelines table in the orca DB contains the 9 pipelines we recently triggered:

Conclusion:

We have shown how to configure a MySQL backend for Orca and Clouddriver. As you can see, it is fairly simple. Stay tuned for how to migrate the existing Orca data in Redis to MySQL with no downtime.